Leveraging evidence to achieve gender equality in and through transforming food systems

Photo: E. van de Grift/CCAFS.

2030 will be a landmark year in global development if the Sustainable Development Goals (SDGs) are successfully achieved. For gender equality in agri-food systems to become a reality by the end of this decade, we will need wide-scale gender-transformative efforts based on rigorous evidence and high-quality science.

Presently, gender research suffers from a glaring lack of evidence, a gap reflected in many ineffective policies around the world. Only 1 in 10 of more than 100,000 research papers on ending hunger reviewed by the international research consortium Ceres2030 in 2020 considered gender differences in outcomes.

The Multilateral Organisation Performance Assessment Network (MOPAN)’s 2019 assessment of CGIAR also recommended the integration of gender issues into the design and implementation of research and human rights policies.

CGIAR launched the GENDER Platform and the CGIAR 2030 Research and Innovation Strategy in 2020 and 2021, respectively, to work toward evidence-based design, targeting and implementation of gender policies by the 2030 deadline.

How evidence can support decisions and development outcomes

Decision makers can draw on the following kinds of advice, each of which warrants a different degree of confidence.

- Good practice – “we’ve done it, we like it, and it feels like we make an impact”;

- Promising approaches – some positive findings, but the evaluations are not consistent or rigorous enough to be sure;

- Research-based – the program or practice is based on sound theory informed by a growing body of empirical research; and

- Evidence-based – the program or practice has been rigorously evaluated and has consistently been shown to work.

Incorporating evidence into decision-making helps inform, define and develop the goals of policies and programs, and then independently evaluate them once they are implemented. For example, CGIAR research in Uganda generated evidence on the positive results of farmer-to-farmer trainings, which enabled farmers to share knowledge and train other farmers on agricultural innovations. This evidence informed two Ugandan policies governing the national extension system, both issued by the Ministry of Agriculture, Animal Industry and Fisheries: the Extension Guidelines and Standards in 2016 and the National Agriculture Extension Policy in 2019.

The good, the bad and the useful

Let’s take a step back. What exactly do we mean by evidence? How is evidence generated? And is there ‘good evidence’ and ‘bad evidence’? How do we choose which evidence to use?

Simply put, evidence is proof supporting a claim or a belief. The Center for Evidence-Based Management (CEBMa) defines evidence as “information, facts or data supporting (or contradicting) a claim, assumption or hypothesis.”

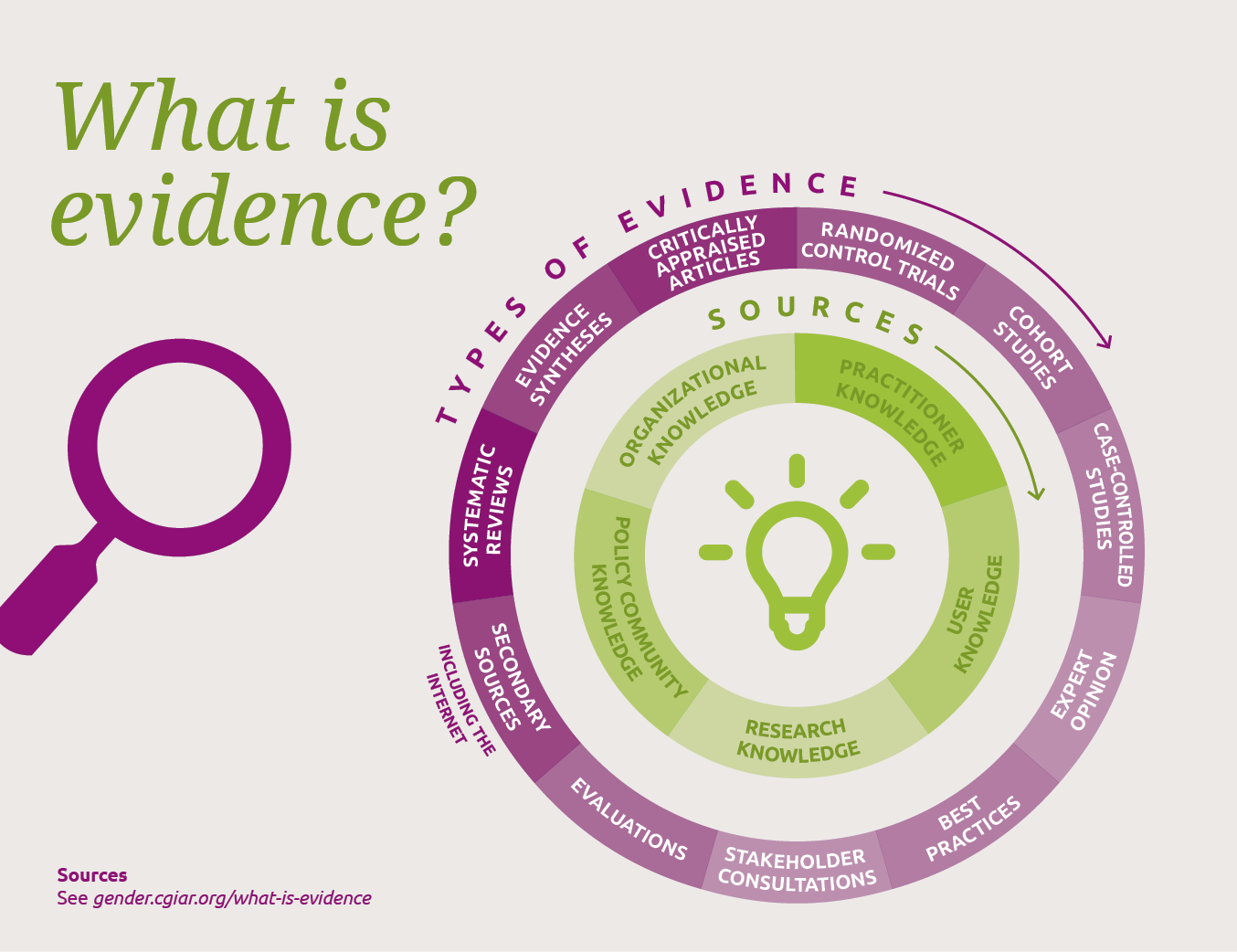

Source: Figure adapted from Pawson (n.d.); Orygen: A quick reference guide to evidence translation: What are sources of evidence?; Perkins, 2010; and R1 Learning: Upon What Evidence Are 'Evidence-Based' Practices Based?

Evidence can come from a variety of sources:

- Organizational knowledge

- Practitioner knowledge

- User knowledge

- Research knowledge

- Policy community knowledge.

Good information is recognized as a foundation for evidence-based policy-making. However, the dynamics of policy-making are influenced by institutional, cultural and professional factors that may not carry scientific rigor. Policymakers thus often rely on narrative evidence for effective policy-making.

Learning from the health sector

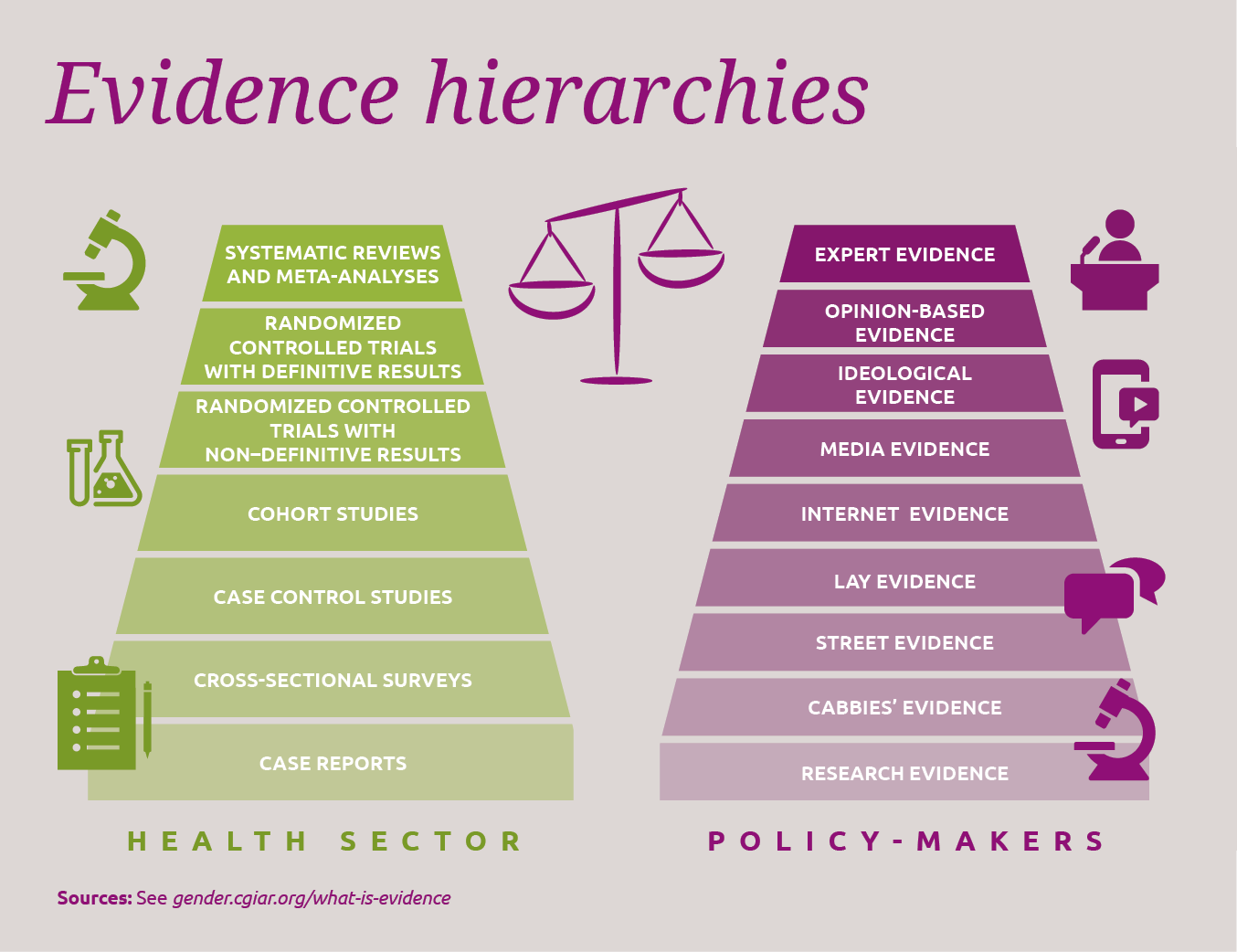

The health sector is considered a pioneer when it comes to using or setting the standards for evidence as bases for clinical decisions. The sector extensively uses hierarchy of evidence to evaluate different pieces of evidence, and attaches a standard to different types of evidence based on the scientific rigor.

Source: Figure adapted from Alliance for Useful Evidence: What counts as good evidence? and Ackley et al. 2007.

These include a) systematic reviews and meta–analyses, b) Randomized Controlled Trials (RCTs) with definitive results, c) RCTs with non–definitive results, d) cohort studies, e) case control studies, f) cross–sectional surveys and g) case reports.

RCTs are regarded as being near the top of the hierarchy and as the best methodology for drawing causal inferences, but they are not always the answer. For example, a study on the impact of a program targeting women under extreme poverty in Pakistan and India found that the RCTs used to evaluate the program failed to meet their own criteria for establishing causality and provided very limited explanations for the outcomes observed, resulting in low impact for women.

In other words, hierarchies based on study design tend to underrate the value of good observational studies and pay insufficient attention to the need to understand what works, for whom, in what circumstances and why. That’s why using such hierarchies to exclude all but the highest-ranking studies from consideration can lead to the loss of useful evidence.

The winding road from evidence to policy

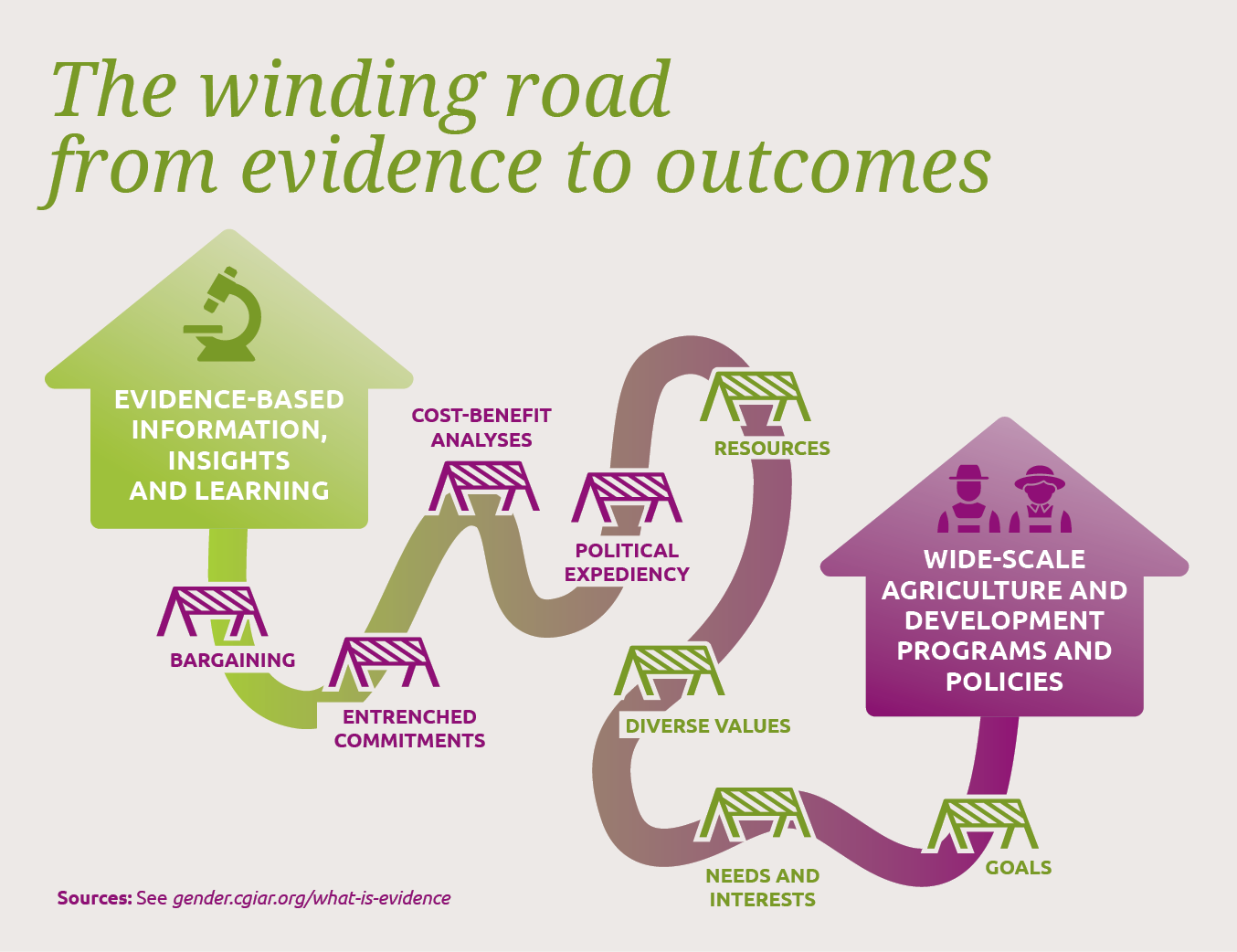

Most decision makers rely on different sources of evidence. Apart from considering the scientific rigor of evidence, they have several other interests, such as cost-benefit analysis, political expediency, context, resources and goals to consider.

Source: Figure adapted from ODI: Impact and Insight: What we know and Head 2017.

There are also practical limitations to political decision-making processes characterized by bargaining, entrenched commitments and the interplay of diverse stakeholder values and interests. Moreover, what constitutes the best-quality evidence varies with the question being asked, which should be aligned with decision makers’ needs and interests. Some researchers therefore argue that a matrix, rather than a hierarchy, should be used to match research designs to the research questions being addressed.

One insider’s view of policymakers’ hierarchy of evidence looks as follows:

1. Expert evidence (including consultants and think tanks)

2. Opinion-based evidence (including lobbyists/pressure groups)

3. Ideological evidence (party think tanks, manifestos)

4. Media evidence

5. Internet evidence

6. Lay evidence (constituents’ or citizens’ experiences)

7. Street evidence (urban myths, conventional wisdom)

8. Cabbies’ evidence

9. Research evidence

To maximize the use of evidence in policy, we should therefore consider including forms of evidence that are of greatest utility and relevance for decision-makers. We need to be pragmatic in combining scientific evidence with governance principles and persuasion to translate complex evidence into simple, influential stories.

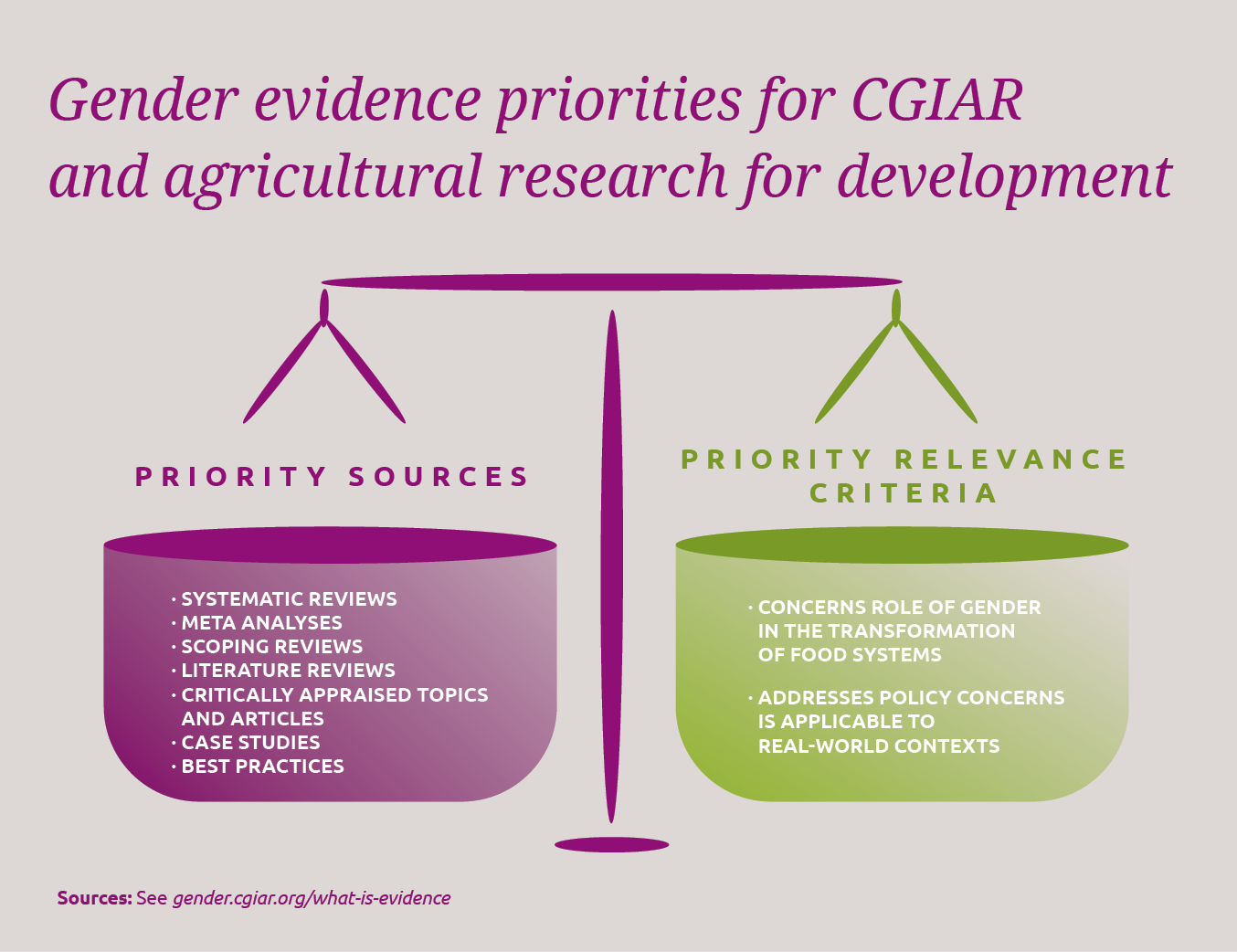

The gender evidence CGIAR needs to pursue

Achieving the outcomes of the CGIAR key impact area on Gender Equality, Youth, and Inclusion will require robust and applicable evidence, that is, evidence that is relevant and can be used in a policy and practice setting. The explanatory power of quantitative evidence can be substantiated with evidence based on grey literature as well as evidence based on participatory research, good practices and case studies.

The famous saying, “one size does not fit all”, seems apt in this context. For gender and social development, unlike healthcare, it is difficult to apply only a standard experimental design in a multidimensional context. Building a strong evidence base hinges on employing a variety of approaches along a continuum, while ensuring relevance and rigor.

The CGIAR GENDER Platform is generating, synthesizing and communicating relevant evidence from research, using appropriate qualitative and quantitative methods, based on specific research questions. This will enable global critical thinking and debates on relevant themes, prioritizing key research areas for filling evidence gaps within and beyond CGIAR.

In an era of urgent, escalating and diverse development needs, with multiple challenges to tackle such as climate change, rising poverty and hunger, and pandemics, we need information, insights and learning so that women are not left behind on the path to progress.

CGIAR’s GENDER Platform, guided by the CGIAR 2030 Research and Innovation Strategy, plays a critical role in this effort by ensuring evidence-based gender-transformative approaches, communication and advocacy to positively influence gender outcomes in time for 2030.

References

Perkins, D. (2010) ‘Fidelity–Adaptation and Sustainability.’ Presentation to seminar series on Developing evidence-informed practice for children and young people: the ‘why and the what.’ Organised by the Centre for Effective Services (www.effectiveservices.org) in Dublin, Cork and Galway in October 2010.

Laborde, D., Murphy, S., Parent, M., Porciello, J. & Smaller C. (2020). Ceres2030: Sustainable Solutions to End Hunger - Summary Report. Cornell University, IFPRI and IISD.

MOPAN. 2019. CGIAR: 2019 Performance Assessment. www.mopanonline.org.

CGIAR System Organization. 2021. CGIAR 2030 Research and Innovation Strategy: Transforming food, land, and water systems in a climate crisis. Montpellier, France: CGIAR System Organization.

Barends, E., Rousseau, D.M., & Briner, R.B. (2014). Evidence-based Management: The Basic Principles. Amsterdam: Center for Evidence-Based Management.

Strategic Policy Making Team Cabinet Office. 1999. Professional policy making for the twenty first century.

Office of the Prime Minister’s Science Advisory Committee, New Zealand. 2013. The role of evidence in policy formation and implementation. A report from the Prime Minister’s Chief Science Advisor.

International Initiative for Impact Evaluation (3ie), 2020. Study findings shape agricultural extension guidelines in Uganda [online summary], Evidence Impact Summaries. New Delhi: 3ie.

Brownson, R. C., Chriqui, J. F., & Stamatakis, K. A. (2009). Understanding evidence-based public health policy. American journal of public health, 99(9), 1576–1583. https://doi.org/10.2105/AJPH.2008.156224

Ackley, Betty, Gail Ladwig, Beth Ann Swan and Sharon Tucker. 2007. Evidence-Based Nursing Care Guidelines. Elsevier. ISBN: 9780323059336.

Nutley, Sandra, Alison Powell and Huw Davies. 2013. What counts as good evidence? Alliance for Useful Evidence.

Naila Kabeer (2019) Randomized Control Trials and Qualitative Evaluations of a Multifaceted Programme for Women in Extreme Poverty: Empirical Findings and Methodological Reflections, Journal of Human Development and Capabilities, 20:2, 197-217, DOI: 10.1080/19452829.2018.1536696.

Brian W. Head (2010) Reconsidering evidence-based policy: Key issues and challenges, Policy and Society, 29:2, 77-94, DOI: 10.1016/j.polsoc.2010.03.001.

Petticrew, M, and H Roberts. “Evidence, hierarchies, and typologies: horses for courses.” Journal of epidemiology and community health vol. 57,7 (2003): 527-9. doi:10.1136/jech.57.7.527

Pawson, Ray. n.d. Assessing the quality of evidence in evidence-based policy: why, how and when? ESRC Research Methods Programme Working Paper No 1. Leeds, UK: University of Leeds.